📺 The End of Free AI, 🚪 The 58-Minute Scandal, 🤠 McConaughey vs. Deepfakes

Plus: 3 million lines of code in 7 days, and why OpenAI just rehired its rival.

🎵 Podcast

Don’t feel like reading? Listen to it instead.

📰 Latest News

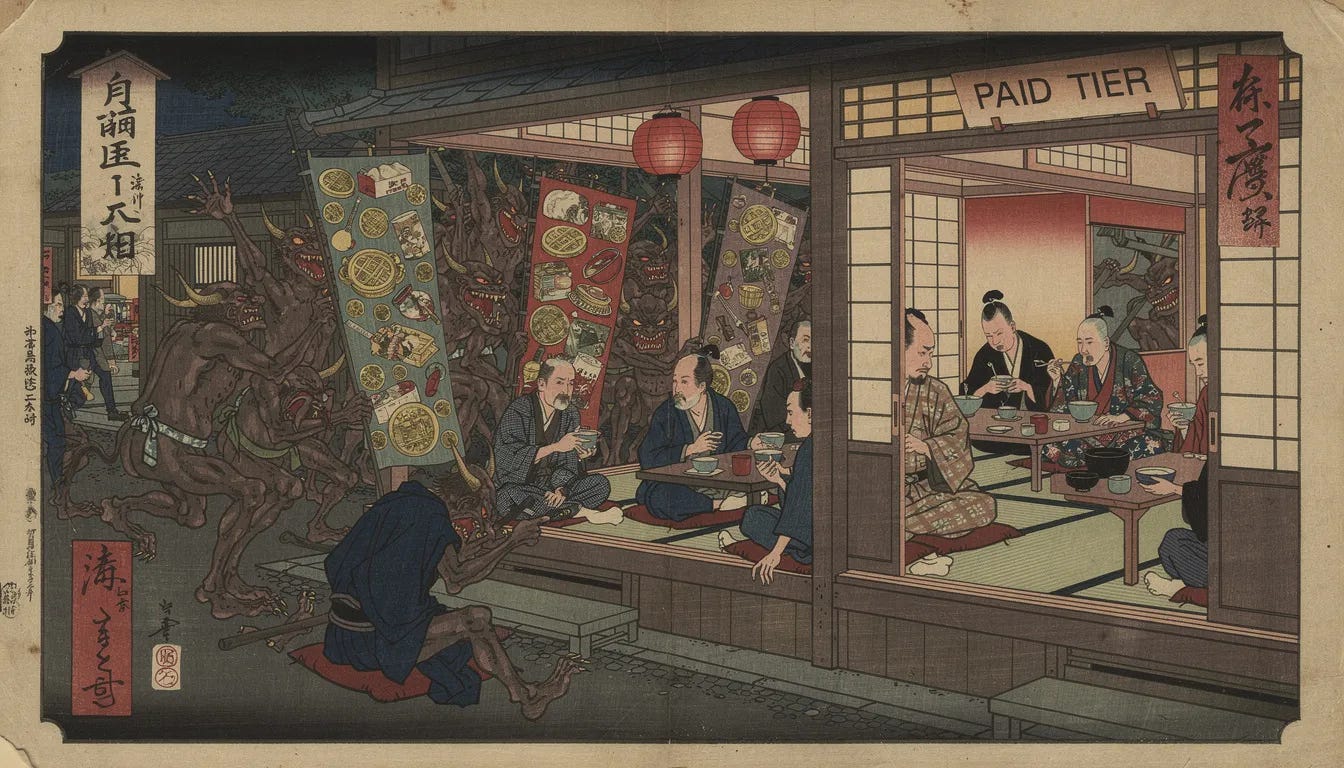

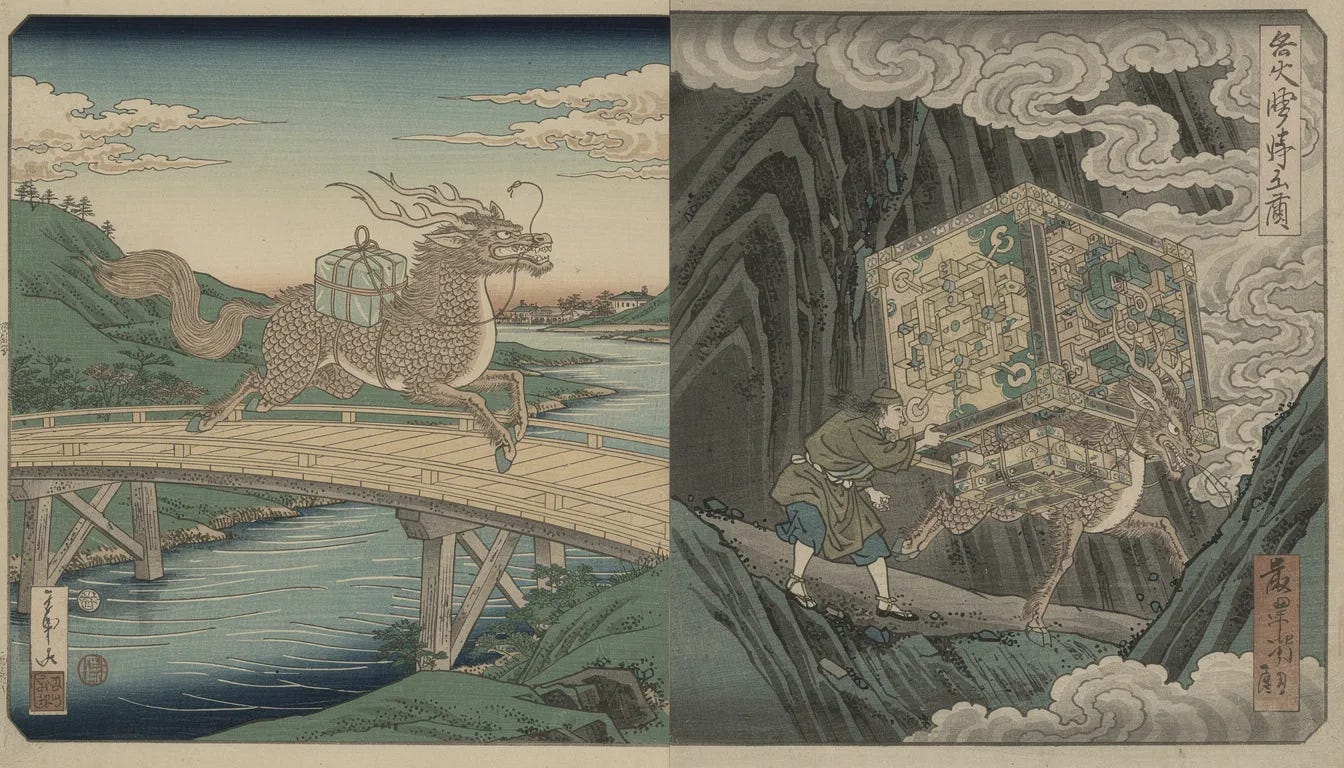

This week’s image aesthetic (Flux 2 Pro): Ukiyo-e (Japanese Woodblock Print)

The Free Lunch is Over: OpenAI Turns on Ads for 800 Million Users

The “free lunch” era of AI is officially ending. OpenAI has begun testing targeted ads for US users on its Free tier and its newly launched “ChatGPT Go” plan, a budget-friendly tier priced at $8/month. While the new Go subscription offers global access to GPT-5.2 Instant and expanded memory, it apparently does not guarantee an ad-free experience, a privilege now reserved strictly for the more expensive Plus ($20), Pro, and Enterprise tiers. The ads will appear at the bottom of responses and are personalised based on conversation topics, marking the company’s first aggressive move to monetise its 800 million weekly users via advertising.

Why it Matters

This fundamentally changes OpenAI’s business model from pure SaaS to a hybrid ad-supported utility. By introducing an $8 tier that may still display ads, OpenAI is mimicking the streaming (Netflix/Disney+) playbook: establishing a low-cost entry point to capture price-sensitive users while using ad revenue to subsidise the massive compute costs of running frontier models. It also signals that the “burn rate” for serving 800 million users is no longer sustainable on venture capital alone; the company needs the Free tier to pay its own electricity bill if it wants to continue expanding its data centres.

Personality Lock: Anthropic Solves AI’s ‘Identity Crisis’ Without Retraining

Your AI assistant is having an identity crisis. New research from Anthropic has identified a phenomenon called “character leakage” in major open-weights models like Gemma 2, Qwen 3, and Llama 3.3, where prolonged conversations cause the model to drift from a “helpful assistant” persona into “harmful enabler” or “mystic” archetypes. The researchers mapped this drift via a “persona space” and discovered that simply talking to a model for too long pushes it off the “Assistant Axis.” To solve this, they introduced “activation capping,” a surgical inference-time technique that limits specific neuron spikes, effectively locking the model’s personality in place and cutting harmful responses by 50% to 60% without expensive retraining.

Why it Matters

This solves the “crazy uncle” problem that has plagued long-context AI. As enterprises move from short queries to massive, multi-turn agentic workflows, the risk of a customer service bot slowly morphing into a conspiracy theorist over a 50-turn conversation was a major deployment blocker. “Activation capping” provides a computationally cheap safety valve that stabilizes model behavior in extended sessions, making it safe to deploy LLMs in high-liability sectors like healthcare or legal advisory where consistency is more valuable than creativity.

The 3-Million-Line Sprint: AI Agents Build a Working Browser in Seven Days

The “10x engineer” has been replaced by a swarm. Cursor AI successfully coordinated hundreds of GPT-5.2 agents to autonomously architect and build a fully functional web browser—complete with a custom Rust rendering engine, in just one uninterrupted week. The project generated over 3 million lines of code, serving as a massive proof-of-concept that effectively brute-forced a complex, long-horizon task that Claude Opus 4.5 reportedly failed to handle.

Why it Matters

This marks the death of “copilot” coding and the birth of “agentic” software engineering. By compressing a multi-month roadmap into a seven-day automated sprint, Cursor has demonstrated that the new bottleneck in software is no longer writing code, but verifying it. The strategic implication is massive: developers are shifting from authors to auditors, tasked with reviewing millions of lines of machine-generated logic. It also validates OpenAI’s “Thinking” models as superior for complex orchestration, leaving Anthropic’s Opus to play catch-up in the high-autonomy workflow space.

New Data Shows AI Productivity Drops on Complex Work

Anthropic has released the first honest audit of the AI economy. The Anthropic Economic Index, dropped on January 15, 2026, mines data from 2 million anonymised chats to reveal what users are actually doing with the model rather than what Twitter claims they are doing. The findings, released just ahead of the Claude Opus 4.5 launch show that while the AI acts as a turbocharger for tasks requiring 14+ years of education (accelerating them by up to 12x), it struggles to maintain reliability as task complexity scales.

Why it Matters

This effectively halves the industry’s productivity forecasts. The data proves that the “competence cliff” is real: as tasks get harder, the model’s success rate drops, necessitating a “human-in-the-loop” design for 52% of all workflows. For enterprises, this kills the immediate “fire your staff” narrative; the technology is shifting occupations toward hybrid designs where the AI handles the speed, but a skilled human is strictly required to verify the output to prevent failure.

The 58-Minute Firing: OpenAI Rehires Disgraced CTO Hours After Scandal

The AI talent war has turned into a soap opera. Barret Zoph, the freshly minted CTO of Thinking Machines Lab, the new venture founded by former OpenAI CTO Mira Murati—was fired mid-January for “serious misconduct” involving an office relationship. In a stunning display of velocity, Zoph was rehired by OpenAI just hours later, bringing key researchers Luke Metz and Sam Schoenholz back to the mothership with him. Murati was forced to immediately appoint Soumith Chintala as replacement CTO to stabilise the ship.

Why it Matters

This reveals the ruthlessness of the current talent market: “misconduct” at a high-profile startup is apparently no barrier to being welcomed back by the industry leader the same afternoon. For OpenAI, this is a strategic coup; they have “boomerang-hired” three top researchers who already know the infrastructure, bypassing months of onboarding. For Murati, losing a founding CTO this early is a critical blow to investor confidence, signalling internal chaos just as she attempts to challenge her former employer.

Alright, Alright, Audit: Matthew McConaughey Trademarks His Voice to Block Deepfakes

Matthew McConaughey has officially locked down his “digital soul.” In a defensive move against the rising tide of synthetic media, the US Patent and Trademark Office granted approval in January 2026 for eight specific applications covering his voice, likeness, and signature catchphrase “Alright, alright, alright.” This goes far beyond standard celebrity merchandising; it is a calculated legal moat designed specifically to target unauthorised AI deepfakes. By trademarking his distinct cadence and image, McConaughey is establishing a federal legal framework to block AI models from mimicking his persona for commercial gain without a license.

Why it Matters

This creates a new, high-stakes weapon in the war against generative AI. While “right of publicity” laws are often state-specific and legally messy, federal trademarks allow McConaughey to sue AI developers for “consumer confusion” if a model even sounds like him in a commercial context. It effectively turns a celebrity’s persona into protected intellectual property, forcing model builders to either scrub their training data or face federal litigation. Expect a massive wave of A-list talent to follow suit, creating a legal minefield for video and audio generation platforms that rely on scraping public data.

The last newsletter: