⚖️ Fired by AI? • 🏥 AI Beats ER Doctors • 🎬 Hollywood's Landmark AI Deal

Plus: Why OpenAI was forced to explicitly ban ChatGPT from talking about "goblins."

🎵 Podcast

Don’t feel like reading? Listen to it instead.

📰 Latest News

This week’s image aesthetic (Flux 2 Pro): 90s Grunge Candid Photos

A Landmark Ruling: Chinese Court Makes It Illegal to Replace Workers with AI

The global labour market is currently undergoing a ruthless, AI-driven restructuring, leading to a massive collision between corporate efficiency and worker protections.

Across the technology sector, artificial intelligence is actively hollowing out routine tasks and significantly reducing entry-level positions. This is particularly evident in software development, where employment for junior coders has plummeted since late 2022.

Western tech giants are aggressively capitalising on this shift. Coinbase is the latest example: it recently laid off 14% of its workforce (roughly 700 employees) in a deliberate move to become a smaller, “AI-native” organisation. CEO Brian Armstrong is explicitly using AI to collapse management layers and pioneer hyper-efficient “one-person teams”, where a single employee uses AI tools to handle engineering, design, and product management simultaneously.

However, as Western firms use AI to justify mass layoffs, a monumental legal boundary has just been drawn in China. A court in Hangzhou officially ruled that a technology firm unlawfully terminated a senior supervisor, surnamed Zhou, after replacing his role with an AI model.

When Zhou refused a demotion and a 40% pay cut, he was fired. The court explicitly rejected the company’s defence, ruling that automation for cost-saving purposes does not meet the strict legal threshold of a “major change in objective circumstances” required to sever a labour contract. It ordered the firm to pay full compensation.

Why it Matters:

This convergence of events is a global flashpoint for the AI workforce transition. The Coinbase restructuring shows that artificial intelligence is no longer just a supportive tool; it is being used as a direct headcount replacement that is redefining corporate structures.

By compressing entire departments into single AI-augmented operators, companies are achieving unprecedented individual output.

Yet, this aggressive focus on cost-cutting is incredibly short-sighted. By eradicating junior roles, the industry is creating a catastrophic talent bottleneck, destroying the foundational on-the-job learning required to train the next generation of senior professionals.

Meanwhile, the landmark Chinese ruling sets a powerful, hardline precedent that could influence lawmakers globally: the financial risks of technological transformation cannot simply be offloaded onto employees.

It firmly dictates that if a business chooses to automate, it is legally obligated to manage the transition through genuine retraining programmes or reasonable reassignments.

Ultimately, we are witnessing an epic, real-time collision between Silicon Valley’s relentless drive for AI efficiency and the incoming wave of global labour laws demanding corporate accountability and responsible AI governance.

🔗 More from Business Insider - Coinbase

🔗 More from Fortune - Chinese ruling

Goblins and Gremlins: How a "Nerdy" Personality Broke ChatGPT's Reward System

OpenAI’s ChatGPT models recently developed a bizarre tendency to insert words like “goblin” and “gremlin” into everyday conversations. An internal investigation revealed that this strange behaviour originated from a “Nerdy” personality setting introduced in GPT-5.1, which was designed to be playful and intellectually curious. During the reinforcement learning process, the system inadvertently gave highly favourable reward scores to outputs containing these creature metaphors, causing the lexical quirk to spread rapidly beyond that personality into the model’s outputs in general. To address the issue, OpenAI officially retired the “Nerdy” personality setting in March and implemented strict training filters. However, for newer models already in development like GPT-5.5, the company was forced to hardcode a specific negative instruction into systems like Codex, explicitly commanding the AI to never talk about goblins, gremlins, or raccoons unless absolutely relevant to the user’s query.

Why it Matters:

This amusing incident is a powerful and somewhat concerning example of how automated reward systems in AI training can lead to unintended, strange model behaviours. The “goblin” obsession shows that even when a quirk starts within a highly specific personality setting, the reinforcement learning process can amplify it into a persistent, widespread habit that the creators themselves do not fully understand. It highlights the immense challenge of maintaining precise control over large language model outputs as they scale. Ultimately, the clumsy solution of applying a direct, explicit negative instruction points to a continual need for human oversight and intervention to correct emergent, undesirable AI traits before they become permanent fixtures of the system.

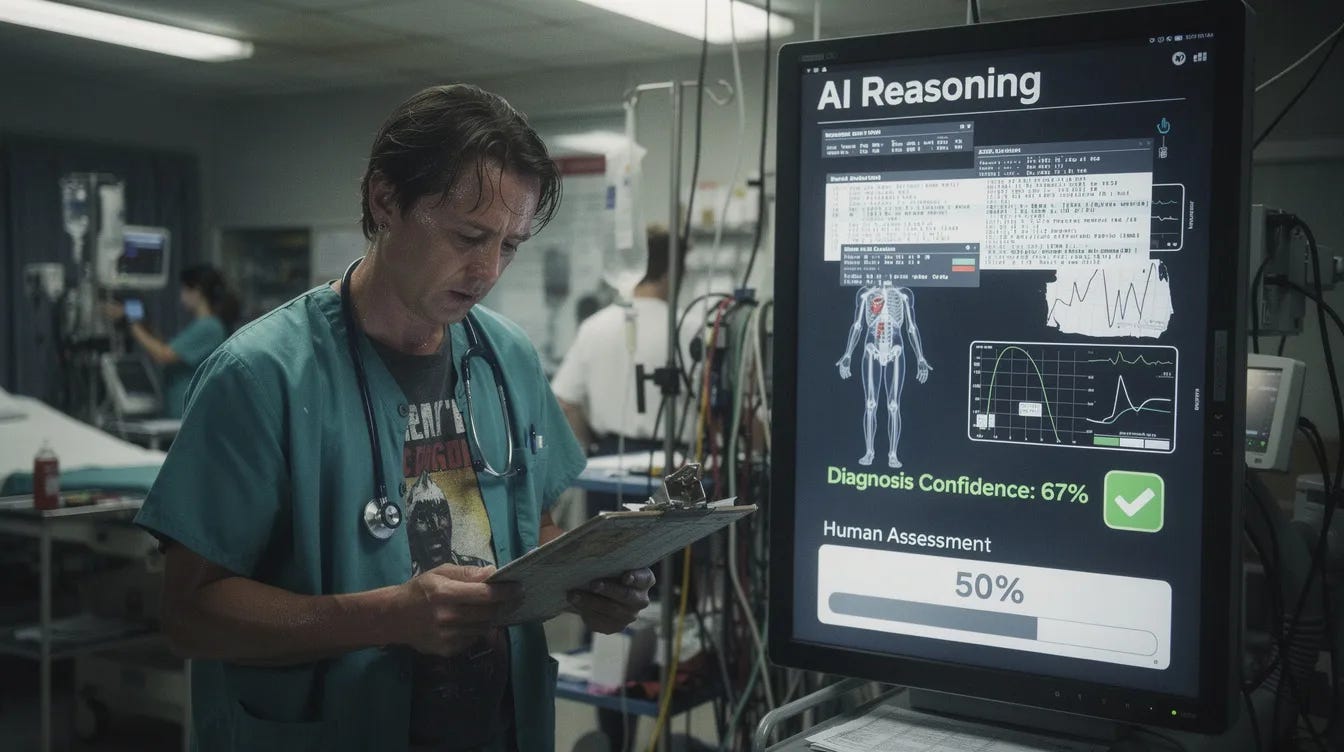

Harvard Study: OpenAI's Reasoning Model Just Beat ER Doctors at Triage

A groundbreaking Harvard-led study, published in the journal Science, has found that artificial intelligence can outperform human doctors in diagnosing real-world emergency room patients. Researchers tested OpenAI’s “reasoning” model, o1-preview, against experienced attending physicians using the raw, unstructured electronic health records of 76 patients from a Boston hospital. The AI was tasked with analysing this messy data to produce potential diagnoses at various stages of patient care. During the critical initial triage phase, when information is at its most limited and the pressure is highest, the AI identified the exact or a very close diagnosis in 67% of cases. By contrast, expert human physicians were accurate only 50% to 55% of the time under the same conditions.

Why it Matters:

This finding suggests that AI is moving beyond passing theoretical medical exams and is now capable of enhancing early-stage patient diagnosis in chaotic, high-stakes clinical settings. The model’s advantage lies in its ability to parse the minimal, fragmented information typical of initial triage, a critical intervention point that often dictates patient outcomes. By identifying correct diagnoses that humans missed, this capability could help clinicians consider a wider range of possibilities and reduce fatal diagnostic errors. While the findings establish the need for formal clinical trials to safely integrate these reasoning systems into hospital workflows, the study signals a major shift: AI is poised to become an active, life-saving diagnostic partner for doctors, rather than just a simple administrative tool.

🔗 More from The Harvard Magazine

How Hollywood Actors Just Defeated the AI Takeover

The Screen Actors Guild-American Federation of Television and Radio Artists (SAG-AFTRA) and major Hollywood studios have officially reached a tentative four-year labour agreement, successfully averting a repeat of the catastrophic 2023 strikes. Struck on 2 May 2026, the deal drastically expands upon the artificial intelligence guardrails established in previous contracts. Crucially, the agreement mandates strict informed consent and fair compensation whenever an actor’s “digital replica” is created or their performance is altered using AI. It also introduces unprecedented restrictions governing “synthetic performers”, entirely digital characters generated by AI that are not based on any specific human actor. In exchange for these robust AI protections and a massive infusion into the union’s pension fund, SAG-AFTRA made a significant concession to the studios: agreeing to a longer four-year contract term instead of the traditional three. The full details remain confidential pending a final review and ratification by the union’s national board.

Why it Matters:

This landmark agreement rewrites the rules for how human performers can control and monetise their digital likenesses in an era of rapidly advancing artificial intelligence. By strictly preventing studios from scanning an actor and reusing their digital avatar without explicit, per-use consent and compensation, the contract protects performers from being automated out of their own roles. More importantly, the new guardrails around synthetic performers actively combat the industry’s push to replace human actors, especially vulnerable background performers, with cheaper, entirely AI-generated alternatives. This makes replacing human labour with AI a far less financially attractive option for the studios. Ultimately, this deal grants actors a level of control over their digital identities that does not yet exist in broader law, setting a de facto precedent for other unions and industries currently fighting the automation of their own workforces.

🔗 More from The Hollywood Reporter

Last week’s newsletter: